Often people who are not familiar with the nature and limitations of statistical methods tend to expect too much of the rating system. Ratings provide merely a comparison of performances, no more and no less. The measurement of the performance of an individual is always made relative to the performance of his competitors and both the performance of the player and of his opponents are subject to much the same random fluctuations. The measurement of the rating of an individual might well be compared with the measurement of the position of a cork bobbing up and down on the surface of agitated water with a yard stick tied to a rope and which is swaying in the wind.

Arpad Elo in Chess Life, 1962

In theory, there is no difference between theory and practice; In practice, there is.

Chuck Reid

- Fixing the BCS

- Although changing no single component will do the job, changing the formula can address the systemic issues.

- Fixing the BCS Not (Part Two)

- The BCS's use of the computers eliminates their most attractive attribute, consistency, and has other flaws.

- Fixing the BCS Not (Part One)

- The human polls are the biggest problem with the current formula. There's a way to address the biggest part of their problem with respect to the BCS.

- The 2004 BCS

- Not as bad as some claim, but still covered in maggots. The NCAA should not condone a system that encourages gambling and discourages sportsmanship.

- My Daddy Can Beat Up Your Daddy!

- A look at the different ways fans mistakenly compare teams by comparing the conferences they play in.

- The BCS blew it again

- For the 2004 season, the BCS radically adjusted the BCS formula in an attempt to make the AP poll winner the same as the BCS winner. Whether this was a good idea or not is subject to debate, but the way they went about it only shows that they know as little about ranking systems as they do about football and public opinion.

- Basic SOS November 2, 2004

- A Strength of Schedule definition that makes sense for formula-based systems.


Standings

- College Football Rankings comparison

- An invaluable resource compiled by Kenneth Massey. A different view of the same data is Summary of 98 Computer Rankings.

For more information, see About the Ratings.

| Week | Composite | aWP | PA-PWP | ISOV | PA-ISOV |
|---|---|---|---|---|---|
| Week 7 | Composite | adjusted Winning Percentage | Performance Against PWP | Iterative Strength of Victory | |
| Week 8 | Composite | aWP | PA-PWP | ISOV | |
| Week 9 | Composite | aWP | PA-PWP | ISOV | |
| Week 10 | Composite | aWP | PA-PWP | ISOV | |
| Week 11 | Composite | aWP | PA-PWP | ISOV | |
| Week 12 | Composite | aWP | PA-PWP | ISOV | |
| Week 13 | Composite | aWP | PA-PWP | ISOV | PA-ISOV |
| Week 14 | Composite | aWP | PA-PWP | ISOV | PA-ISOV |
| Jan 5 | Composite | aWP | PA-PWP | ISOV | PA-ISOV |

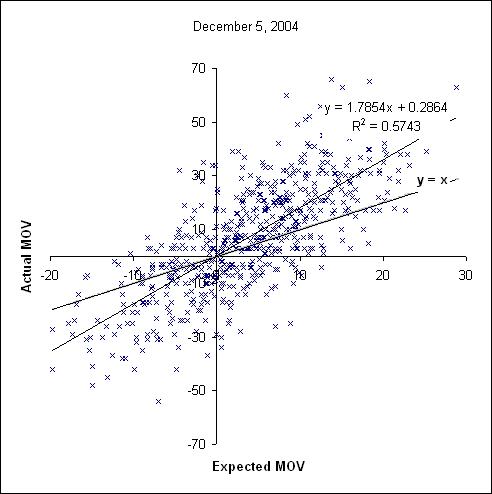

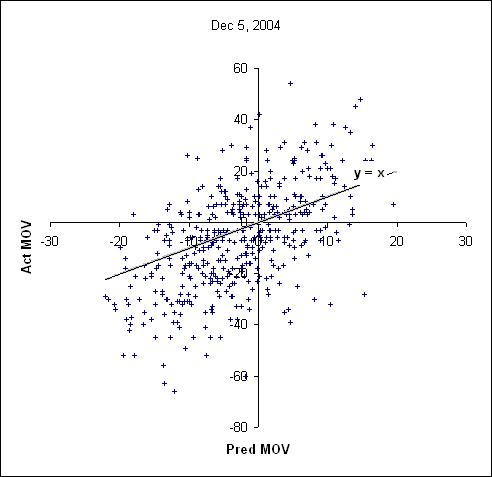

Actual versus Predicted MOV


Where the predicted margin of victory (Predicted MOV, on the X-axis) and the actual margin of victory (Actual MOV, on the Y-axis) are both positive or both negative, the method correctly predicted the winner. When the predicted MOV is negative but the actual MOV is positive, the method predicted a win by the home team but the visitor won. When the predicted MOV is positive and the actual MOV is negative, the visitor was predicted to win but lost. Points between the line Y=X and the X-axis represent games where the winner was picked correctly, but the predicted margin was larger than the actual margin.

For the retrodictive analysis, home and visiting teams are reversed. The sign of the expected MOV matched the game results 80.7 percent of the time (503-120).
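The quadrant reading of the chart can be sketched in a few lines of Python. This is only an illustration, not the code behind the ratings: the function names `classify` and `sign_match_rate` and the sample games are hypothetical, and the sign convention (positive MOV means the visitor wins) is inferred from the description of the chart. Games with a zero predicted or actual margin are set aside as "no call", which is also an assumption.

```python
def classify(pred_mov, actual_mov):
    """Classify one game by comparing predicted and actual MOV.

    Assumed sign convention (inferred from the chart description):
    positive MOV = visitor wins, negative MOV = home team wins.
    """
    if pred_mov == 0 or actual_mov == 0:
        # Pick'em or tie: not covered by the chart description (assumption).
        return "no call"
    if (pred_mov > 0) != (actual_mov > 0):
        # Signs differ: the predicted winner lost.
        return "wrong winner"
    if abs(pred_mov) > abs(actual_mov):
        # Same sign, point lies between the line Y=X and the X-axis.
        return "right winner, margin overestimated"
    return "right winner, margin not overestimated"


def sign_match_rate(games):
    """Fraction of decided games where predicted and actual MOV share a sign."""
    calls = [classify(pred, actual) for pred, actual in games]
    decided = [c for c in calls if c != "no call"]
    right = sum(c.startswith("right") for c in decided)
    return right / len(decided)


# Hypothetical (predicted MOV, actual MOV) pairs, for illustration only.
games = [(7.0, 3), (-10.5, -21), (4.0, -6), (-3.0, 14)]
for pred, actual in games:
    print(pred, actual, classify(pred, actual))

# The quoted 503-120 record expressed as a percentage.
print(f"{503 / (503 + 120):.1%}")
```

The last line reproduces the arithmetic behind the quoted figure: 503 matches out of 623 games.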